Your inbox has 500 new resumes. By the end of the day, you need a shortlist. Sound familiar?

If you’ve tried to solve this with the usual tools, you know the pain. Manual review is thorough, but it takes days. Old-school keyword filters are fast, but the shortlist is a mess, full of people who gamed the system and missing the ones who didn’t.

AI resume ranking promises to fix this. But that promise only holds if you treat it as an operational workflow, not a magic filter you just switch on. The teams I see succeeding with this don’t just buy a tool. They build a system. It’s all about clear criteria going in, a ranked output coming out, human checkpoints at the right moments, and a feedback loop that makes the whole thing smarter over time.

This article gives you that system, step by step.

The reality check: what it takes to screen 500+ applications a day (and what AI can’t do for you)

Let’s get one thing straight. AI resume ranking just accelerates the work a human would do anyway: comparing candidates against a defined standard. It doesn’t replace the human judgment that defines that standard in the first place. If your job description is vague, your AI-generated shortlist will just be vague at a much bigger scale.

When AI ranking is the right tool vs. when your problem is upstream

AI ranking is your friend when you’re drowning in 500 applications and can’t find the good candidates in the noise. But if most of those 500 applications are completely irrelevant from the start, your problem isn’t screening speed. Your problem is your job post or your sourcing channel. Fix that first. AI ranking is the right tool for a volume problem, not an applicant-quality problem.

The 3 constraints you must manage: speed, quality, and fairness

Every high-volume screening process is a balancing act between these three. Speed without quality gets you a bad shortlist, fast. Quality without fairness opens you up to massive legal and reputational risk. This workflow is designed to optimize for all three. We’ll do it by automating the grunt work, putting human eyes where they matter, and auditing the results.

Step 0 — Define the job signals AI should rank on (so your shortlist isn’t garbage)

This is the most important step, and you have to do it before you touch a single resume. I mean it. Most of the time when I hear a hiring manager complain about a bad AI shortlist, it’s because they skipped this conversation. Do it now, when it’s cheap to fix.

Must-haves vs. nice-to-haves vs. deal-breakers (and why mixing them breaks ranking)

When a hiring manager tells you everything is a “requirement,” the AI has no way to tell what’s actually important. You get a ranked list where every candidate scores between 85 and 89, which is completely useless.

You need to force the conversation and classify every criterion into one of three buckets:

- Deal-breakers (knockouts): If a candidate is missing one of these, the review is over. Think specific licenses, work authorization, or minimum years in a specific domain.

- Must-haves: These are the core requirements for someone to do the job. The AI should weight these heavily.

- Nice-to-haves: These are the differentiators that separate a good candidate from a great one. They should lift a score, not carry it.

Put this in a simple table. This forces the hard conversation with the hiring manager early.
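To show why the three buckets need to stay separate, here is a minimal scoring sketch. It is not how any particular product scores candidates. The `Criteria` class, the signal names, and the weights are all hypothetical, and it assumes each resume has already been reduced to a set of detected signals.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Criteria:
    deal_breakers: set[str]          # missing any one of these ends the review
    must_haves: dict[str, float]     # signal -> weight (heavy)
    nice_to_haves: dict[str, float]  # signal -> weight (light)


def score_candidate(signals: set[str], criteria: Criteria) -> Optional[float]:
    """Return a 0-100 score, or None when a deal-breaker is missing."""
    if not criteria.deal_breakers <= signals:
        return None  # knocked out: no score is produced at all
    weights = {**criteria.must_haves, **criteria.nice_to_haves}
    total = sum(weights.values())
    earned = sum(w for signal, w in weights.items() if signal in signals)
    return round(100 * earned / total, 1)


# Hypothetical criteria for a backend engineering role.
criteria = Criteria(
    deal_breakers={"work_authorization"},
    must_haves={"rest_api_experience": 4.0, "python_or_go": 3.0},
    nice_to_haves={"system_design_project": 1.0},
)

strong = {"work_authorization", "rest_api_experience", "python_or_go"}
knocked_out = {"rest_api_experience", "python_or_go", "system_design_project"}

print(score_candidate(strong, criteria))       # 87.5
print(score_candidate(knocked_out, criteria))  # None
```

Because the nice-to-have carries one point out of eight, it can lift a score but never carry it. If every criterion had the same weight, scores would bunch together exactly the way the 85-to-89 list does.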

Translate the role into “evidence signals” (what the resume must demonstrate)

Vague requirements like “strong communication skills” are not rankable signals. What does that even look like? For an AI to do its job, you have to tell it what to look for.

For each must-have, ask: What’s the evidence on a resume that a candidate actually has this skill? An engineering role needing “backend experience” turns into: evidence of building REST APIs, specific languages like Python or Go, or projects involving system design. A sales role needing “quota attainment” turns into: revenue numbers, average deal sizes, or territory management experience. These observable signals are what a good AI can actually score against.

Role-specific tuning examples and seniority differences

One size does not fit all. You’ll need to tune your signals for every role. Here’s a pattern I use as a starting point:

| Role | Emphasize | De-emphasize |
|---|---|---|
| Engineering | Technical depth, project outcomes, stack specifics | School prestige, total years of experience (relevant experience is what matters) |
| Sales | Quota data, deal scale, industry vertical match | Job title (titles are all over the place) |
| Ops/Logistics | Process ownership, tool stack, measurable throughput | Formal credentials (less common in ops) |

Seniority changes the signals too. For senior roles, you’re looking for evidence of scope and decision-making, not just task completion. For junior roles, you might weigh potential, like adjacent skills or relevant coursework. If you get this wrong, you’ll filter out great senior candidates who don’t write resumes like they’re fresh out of college.

Step 1 — Build a high-volume intake pipeline (so 500 resumes don’t become 500 data problems)

Clean data in, reliable rankings out. It’s that simple. Before any scoring happens, your resumes need to be turned into structured data.

Standardize inputs: parsing/normalization and why formatting variance matters

Resumes come in every format imaginable. PDFs, Word docs, scanned images. An AI that tries to rank raw files is just guessing. A resume parser is the tool that turns that mess into structured fields like work history, skills, and education. That normalization is what lets you compare 500 candidates consistently.

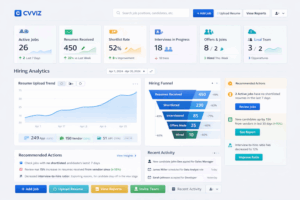

A good parser, like the one in CVViZ, handles this extraction step automatically. It pulls the key data into a structured format that’s ready for ranking. If you already have an ATS, you can often plug in a parsing API to handle this without replacing your whole system.

De-duplication and candidate identity hygiene

When you’re hiring at scale, the same person often applies through multiple channels. Without good deduplication, you end up reviewing the same candidate twice, messing up your metrics, and creating a confusing experience. A good intake flow, like the one in CVViZ, detects duplicates so your ranked list reflects unique people, not just unique applications.
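If you are wiring up your own intake, the basic idea looks something like the sketch below. It only catches exact matches on a normalized email or phone number. The field names (`application_id`, `email`, `phone`, `source`) are assumptions for illustration, not a real ATS schema. Production deduplication usually also needs fuzzier matching, which this deliberately leaves out.

```python
import re


def identity_key(application: dict) -> str:
    """Build a normalized key: lowercased email, else digits-only phone."""
    email = (application.get("email") or "").strip().lower()
    if email:
        return f"email:{email}"
    phone = re.sub(r"\D", "", application.get("phone") or "")
    if phone:
        return f"phone:{phone}"
    # No usable identifier: treat the application as its own person.
    return f"app:{application['application_id']}"


def deduplicate(applications: list[dict]) -> list[dict]:
    """Keep the first application per identity and record every source."""
    unique: dict[str, dict] = {}
    for app in applications:
        key = identity_key(app)
        if key in unique:
            unique[key]["sources"].append(app["source"])
        else:
            unique[key] = {**app, "sources": [app["source"]]}
    return list(unique.values())


applications = [
    {"application_id": 1, "email": "Ana@Example.com", "phone": None, "source": "careers_page"},
    {"application_id": 2, "email": "ana@example.com ", "phone": None, "source": "job_board"},
    {"application_id": 3, "email": None, "phone": "+1 (555) 010-0000", "source": "referral"},
]

people = deduplicate(applications)
print(len(people))           # 2
print(people[0]["sources"])  # ['careers_page', 'job_board']
```

Keeping the list of sources on the surviving record means your channel metrics stay honest even though the ranked list shows each person once.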

Multi-language/international resumes: decide upfront how you’ll handle them

If you’re hiring globally, figure this out before you start. Your options are basically: (1) require English-language resumes, which is clear and fair if you state it upfront; (2) use a tool with multilingual support and spot-check the results; or (3) route non-English resumes to a human reviewer. Just don’t run them through an English-only parser and trust the score. You will accidentally throw away great candidates.

Step 2 — Run the 500+ daily workflow: rank, batch-review, and move candidates forward fast

Okay, this is where the playbook runs. Treat this as a focused, time-boxed activity, not a casual scroll through profiles.

Generate the ranked queue: what “contextual/semantic” ranking changes vs. keyword filtering

There’s a huge difference here. Keyword filtering asks, “Does this resume contain the word ‘CRM’?” Semantic ranking asks, “Does this resume demonstrate experience managing customer relationships?” A candidate who wrote about “managing revenue operations” but never typed “CRM” shows up in a semantic search. With keywords, they’re invisible.

If you want a deeper explanation of how this works in practice, semantic search is the core concept behind contextual matching.

Tools like CVViZ’s AI Resume Screening use this contextual approach. The output is a queue ranked by actual fit, not by who knew the magic words to use. But remember, a rank is a signal for priority, not a final decision. A score of 87 just means “look at this person first.”

Set triage thresholds (Top / Maybe / No) and a QA sampling method

Before you even look at the list, define your triage bands. Something like this:

- Top (top 10-15%): These get an immediate, full review by a human recruiter.

- Maybe (next 20-30%): These are borderline. Worth a quick scan if your “Top” bucket doesn’t yield enough prospects.

- No (the rest): Automatically dispositioned.

For quality assurance, pull a random 5% sample from the “No” pile every week and have a human review them. This is how you catch systematic errors before they become a big problem.
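The bands and the weekly QA sample are simple enough to sketch in a few lines. This assumes you already have candidate IDs sorted best-first. The function names and the default shares (15% Top, 25% Maybe, 5% sample) are illustrative picks from the ranges above, not fixed rules.

```python
import random
from typing import Optional


def triage(ranked_ids: list[str], top_share: float = 0.15,
           maybe_share: float = 0.25) -> dict[str, list[str]]:
    """Split a best-first ranked list into Top / Maybe / No bands."""
    n = len(ranked_ids)
    top_end = round(n * top_share)
    maybe_end = top_end + round(n * maybe_share)
    return {
        "top": ranked_ids[:top_end],
        "maybe": ranked_ids[top_end:maybe_end],
        "no": ranked_ids[maybe_end:],
    }


def qa_sample(no_band: list[str], share: float = 0.05,
              seed: Optional[int] = None) -> list[str]:
    """Draw a random sample of the No band for human review."""
    if not no_band:
        return []
    k = max(1, round(len(no_band) * share))
    return random.Random(seed).sample(no_band, k)


ranked = [f"cand-{i:03d}" for i in range(500)]  # placeholder IDs, best first
bands = triage(ranked)
sample = qa_sample(bands["no"], seed=7)

print(len(bands["top"]), len(bands["maybe"]), len(bands["no"]))  # 75 125 300
print(len(sample))                                               # 15
```

The sample has to be random. If reviewers pick which “No” resumes to check, they will tend to pick the ones that already look plausible, and you lose the ability to estimate how many good people the ranking is missing.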

Batch-review technique: how to review 100+ “Top/Maybe” efficiently

Don’t open resumes one by one. That way lies madness. Work in structured batches.

- Start with your ranked “Top” list. Spend no more than 45-60 seconds on each resume. You’re just confirming the AI’s read.

- As you scan, flag candidates who look promising. Don’t stop to make a decision, just flag.

- After the first pass, go back and do a second, closer review on only the flagged resumes.

- Move the confirmed good candidates to the next step before you even think about looking at the “Maybe” pile.

This keeps you focused on the best prospects and prevents you from getting lost in the weeds.

Fast handoff: outreach, next-step scheduling, and keeping candidates warm

The fastest shortlist on earth is worthless if your top candidates go cold while waiting for you to email them. Aim for same-day or next-morning outreach for your top band. Use pre-written templates to get this done in minutes, not days.

Step 3 — Automate the “everything after ranking” to protect throughput

Congratulations, you’ve solved the ranking bottleneck. Your new bottleneck is everything that happens after ranking, like sending emails and scheduling interviews.

Rules/triggers for immediate responses and stage transitions

Set up automation rules that trigger when a candidate’s status changes. When you move someone to “Top,” an acknowledgment email should go out automatically. When a hiring manager gives a thumbs-up, the scheduling link should be sent. A good system like CVViZ supports this with workflow automation, so recruiters can stop acting as manual relay switches.
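Conceptually, a rule like this is just a mapping from a status-change event to one or more actions. The sketch below is a toy version under that assumption. The event names and the two action functions are hypothetical, and a real system would call an email or scheduling service instead of returning strings.

```python
from typing import Callable


# Hypothetical actions that only describe what would be sent.
def send_acknowledgment(candidate: str) -> str:
    return f"acknowledgment email queued for {candidate}"


def send_scheduling_link(candidate: str) -> str:
    return f"scheduling link queued for {candidate}"


# Event name -> actions to run when that event fires.
RULES: dict[str, list[Callable[[str], str]]] = {
    "moved_to_top": [send_acknowledgment],
    "manager_approved": [send_scheduling_link],
}


def on_status_change(event: str, candidate: str) -> list[str]:
    """Run every action registered for the event; unknown events do nothing."""
    return [action(candidate) for action in RULES.get(event, [])]


print(on_status_change("moved_to_top", "cand-042"))
print(on_status_change("archived", "cand-042"))  # []
```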

Pre-screening questions to reduce recruiter time (without over-filtering)

Use pre-screening questions for hard knockouts only, such as work authorization or whether the posted salary range works for the candidate. But be careful. Every question you add will cause some people to drop off. Stick to 2-3 questions max and make them simple yes/no.

Candidate communication at scale (templates, bulk emails, status updates)

At this volume, candidate experience dies without automation. People who hear nothing assume they were rejected. Use bulk email tools and templates to keep everyone in the loop. A quick “thanks but no thanks” email or a status update for active candidates makes a huge difference.

Step 4 — Reduce bias and increase trust: practical governance for AI screening

This isn’t an optional step. This is what separates a defensible, professional process from a future lawsuit.

Where bias enters (job criteria, training data/feedback, proxies) and how to block it

Bias creeps in from three places. First, your criteria. If your “must-haves” happen to correlate with demographic proxies (like specific elite schools or certain zip codes), the AI will learn that bias. Second, your feedback. If the humans approving candidates are biased, the AI will learn from those decisions and amplify the bias. Third, the tool itself. If it was trained on resumes from a narrow group, it might not read others accurately.

Here are the practical blocks: review your criteria for proxies before you start, anonymize resumes during the initial ranking if your tool allows it, and regularly check your pass-through rates by demographic group.
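The pass-through check is mostly arithmetic, so here is a rough sketch of it. It assumes you can export one `(group, advanced)` pair per candidate. The group labels, the counts, and the `min_ratio` threshold of 0.8 are illustrative assumptions. Pick the real threshold with your legal or compliance team.

```python
from collections import Counter


def pass_through_rates(records: list[tuple[str, bool]]) -> dict[str, float]:
    """records holds (group, advanced) pairs; returns share advanced per group."""
    applied = Counter(group for group, _ in records)
    advanced = Counter(group for group, ok in records if ok)
    return {group: advanced[group] / applied[group] for group in applied}


def flag_gaps(rates: dict[str, float], min_ratio: float = 0.8) -> list[str]:
    """List groups whose rate falls below min_ratio of the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        return []
    return sorted(g for g, rate in rates.items() if rate / best < min_ratio)


# Made-up numbers purely to exercise the functions.
records = (
    [("group_a", True)] * 30 + [("group_a", False)] * 70
    + [("group_b", True)] * 18 + [("group_b", False)] * 82
)

rates = pass_through_rates(records)
print(rates)             # {'group_a': 0.3, 'group_b': 0.18}
print(flag_gaps(rates))  # ['group_b']
```

A flag here is a prompt to investigate your criteria and feedback, not proof of bias on its own. Small groups produce noisy rates.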

Add “human-in-control” checkpoints and override rules

A human must always review the “Top” candidates. Period. Recruiters need the ability to override any AI ranking, and those overrides should be logged. I also recommend an escalation rule: if a hiring manager disagrees with more than 10% of the AI’s top picks, it’s a signal to review the scoring criteria, not to tell the manager they’re wrong.
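Both pieces, the override log and the 10% escalation rule, can be sketched in a few lines. The `Override` fields and function names below are hypothetical, and the threshold default simply mirrors the 10% figure above.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Override:
    candidate_id: str
    reviewer: str
    ai_band: str      # band the ranking assigned
    human_band: str   # band the reviewer moved the candidate to
    reason: str
    at: datetime


def log_override(log: list[Override], candidate_id: str, reviewer: str,
                 ai_band: str, human_band: str, reason: str) -> Override:
    """Append a timestamped override record and return it."""
    entry = Override(candidate_id, reviewer, ai_band, human_band, reason,
                     datetime.now(timezone.utc))
    log.append(entry)
    return entry


def needs_criteria_review(top_picks: int, manager_rejections: int,
                          threshold: float = 0.10) -> bool:
    """True when the manager disagreed with more than `threshold` of top picks."""
    if top_picks == 0:
        return False
    return manager_rejections / top_picks > threshold


audit_log: list[Override] = []
log_override(audit_log, "cand-042", "recruiter_1", "maybe", "top",
             "relevant project work the score missed")

print(needs_criteria_review(top_picks=75, manager_rejections=8))  # True
print(needs_criteria_review(top_picks=75, manager_rejections=7))  # False
```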

Documentation and audit readiness (what to record, how to explain decisions)

You need a paper trail. For every job, record the criteria you used, which candidates were reviewed by whom, and any overrides. If anyone ever asks why a candidate was declined, your answer can’t be “the AI said so.” It has to be based on the documented, job-related criteria.

Step 5 — Measure, troubleshoot, and improve ranking over time (the feedback loop)

An AI ranking system that never gets feedback is just a fancy, static filter. The feedback loop is what builds trust and makes the tool better over time.

The KPI set for high-volume screening (speed, funnel health, quality signals)

You can’t improve what you don’t measure. Track these from day one:

- Time-to-shortlist: How fast are you getting from application to shortlist?

- Pass-through rate by stage: What percentage of “Top” candidates make it to a phone screen? To an interview? To an offer?

- False negative rate (estimated): From your weekly QA sample, how many good people are you missing?

- Hiring manager satisfaction: Are managers happy with the shortlists? Just ask them.

- Offer acceptance rate: If great candidates are dropping out late in the process, your speed might still be the problem.
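Two of these are easy to compute yourself if your tool doesn’t report them. The sketch below assumes you can export application and shortlist timestamps and that your weekly QA sample was drawn at random. The function names and the sample numbers are made up for illustration.

```python
from datetime import datetime
from statistics import median


def time_to_shortlist_hours(pairs: list[tuple[datetime, datetime]]) -> float:
    """Median hours between the applied and shortlisted timestamps."""
    return median((short - applied).total_seconds() / 3600
                  for applied, short in pairs)


def estimated_false_negatives(no_band_size: int, sample_size: int,
                              good_in_sample: int) -> tuple[float, int]:
    """Extrapolate the QA sample to the whole No band: (rate, estimated count)."""
    rate = good_in_sample / sample_size
    return rate, round(rate * no_band_size)


pairs = [
    (datetime(2024, 3, 4, 9, 0), datetime(2024, 3, 4, 13, 0)),   # 4 hours
    (datetime(2024, 3, 4, 10, 0), datetime(2024, 3, 4, 16, 0)),  # 6 hours
    (datetime(2024, 3, 4, 11, 0), datetime(2024, 3, 5, 17, 0)),  # 30 hours
]

print(time_to_shortlist_hours(pairs))  # 6.0
rate, missed = estimated_false_negatives(no_band_size=300, sample_size=15,
                                         good_in_sample=1)
print(round(rate, 3), missed)          # 0.067 20
```

I use the median rather than the mean for time-to-shortlist so one application that sat over a weekend doesn’t distort the picture. With a sample of 15, treat the false negative estimate as a rough signal, not a precise number.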

If you want a broader menu of metrics beyond this shortlist, “Recruitment Analytics – Important Metrics” breaks down the most useful measures to track.

Recruitment analytics tools, like those in CVViZ, can track most of this for you, making it easy to see where things are working and where they are getting stuck.

How to feed interview/hiring manager outcomes back into ranking refinement

Close the loop. Every couple of weeks, look at who got interviewed and who got rejected after an interview. Compare that to their original AI rank. If you see a pattern, like great interviewees who were ranked in the “Maybe” pile, it means your criteria are wrong. Figure out which signal you were missing and adjust the weights. This review can turn a decent AI tool into an indispensable one in a few months.
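One way to hunt for the missing signal is to look at strong interviewees who came from the “Maybe” band and count what they have in common that your criteria don’t score. The sketch below assumes each interviewed candidate has a band, an interview outcome, and a set of detected signals. The record shape and signal names are hypothetical.

```python
from collections import Counter


def unscored_signals(interviewed: list[dict],
                     scored_signals: set[str]) -> list[tuple[str, int]]:
    """Count signals on strong Maybe-band interviewees that the criteria ignore."""
    counts: Counter = Counter()
    for person in interviewed:
        if person["band"] == "maybe" and person["passed_interview"]:
            # sorted() keeps tie order stable between runs
            counts.update(sorted(set(person["signals"]) - scored_signals))
    return counts.most_common()


scored = {"rest_api_experience", "python_or_go"}
interviewed = [
    {"band": "maybe", "passed_interview": True,
     "signals": {"python_or_go", "on_call_ownership", "open_source"}},
    {"band": "maybe", "passed_interview": True,
     "signals": {"rest_api_experience", "on_call_ownership"}},
    {"band": "top", "passed_interview": True,
     "signals": {"rest_api_experience", "python_or_go", "open_source"}},
    {"band": "maybe", "passed_interview": False,
     "signals": {"on_call_ownership"}},
]

print(unscored_signals(interviewed, scored))
# [('on_call_ownership', 2), ('open_source', 1)]
```

In this made-up data, `on_call_ownership` shows up on both strong “Maybe” candidates, which would make it a candidate for a new must-have or a heavier weight. Check it against the bias review before adding it.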

Troubleshooting guide: when rankings look wrong

| Symptom | Likely Cause | Fix |
|---|---|---|
| Strong candidates buried in “No” | Criteria too narrow or keyword-focused | Broaden must-have definitions; add semantic signals |
| “Top” band full of poor fits | Nice-to-haves are weighted too heavily | Re-weight criteria; make your must-haves stricter |
| Same candidates ranking top repeatedly | Parsing issue or lack of variety in your applicants | Check parser output; check your sourcing channels |
| International candidates missing | The parser is struggling with non-English text | Route them to a human or use a multilingual parser |
| Rankings identical across many candidates | Criteria aren’t specific enough | Add more role-specific evidence signals |

Choosing an AI resume ranking tool for 500+/day: evaluation criteria and vendor questions

Must-have capabilities for high volume (ranking quality, automation, integrations, reporting)

At this scale, you need an enterprise-grade tool. It has to handle the volume without slowing down, offer robust automation rules, integrate with your existing systems (or provide a good API), and give you reports that actually measure funnel health, not just vanity metrics.

If you’re evaluating whether an ATS is the right backbone for this kind of workflow, “How ATS Can Make Candidate Screening Faster and Smarter” lays out the core capabilities to look for.

Questions to ask vendors about scoring, explainability, bias controls, and integrations

Before you sign any contract, ask these direct questions:

- How does your model explain why a candidate got a certain score?

- What bias auditing features are built in? Can I see pass-through rates by demographic?

- How do you handle resumes that aren’t in English?

- If my job criteria change, how quickly does the ranking update?

- Can your tool plug into my current ATS, or do I have to rip and replace everything?

Their answers will tell you if they built a tool for real-world, high-volume recruiting or just a demo.

Put AI resume ranking into a workflow (not a black box)

AI resume ranking doesn’t make high-volume hiring easy. It makes it manageable. The teams that win with it treat it like any other operational process they need to configure, monitor, and continuously improve. It’s about defining criteria, cleaning your data, keeping humans in the loop, and using feedback to get smarter.

If you want a more detailed breakdown of the benefits and tradeoffs, “AI for Resume Screening” is a useful companion read alongside this workflow.

CVViZ brings AI screening, ranking, workflow automation, and analytics together on one platform. It’s built to support the kind of high-control, high-volume process this article describes. If you’re ready to stop drowning in resumes and start running a repeatable, scalable workflow, it’s worth seeing how it works in practice.